The Curve Just Went Vertical

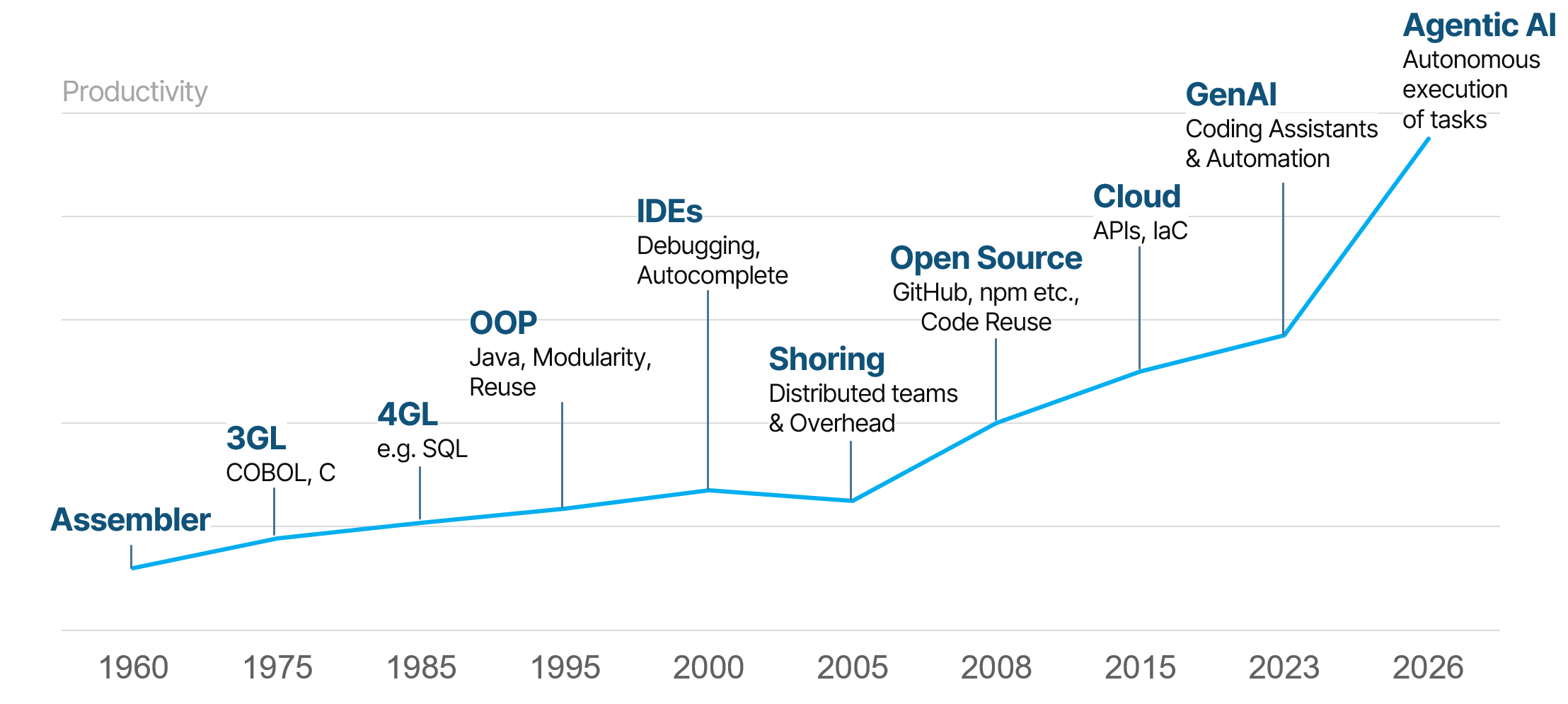

Every generation of software development tools has promised a productivity revolution. Assembler replaced machine code. COBOL and C abstracted away hardware. SQL, Java, IDEs, open source, cloud APIs – each wave raised the ceiling on what a development team could deliver. Each one mattered. None of them changed the fundamental contract between humans and machines: humans specify intent, machines execute instructions.

Agentic AI breaks that contract. Not gradually – abruptly. Tools like Claude Code don’t just autocomplete; they plan, execute, test, refactor, and document. They operate across entire codebases with a degree of autonomy that no previous tool has approached. The productivity curve that took six decades to climb from Assembler to the cloud has gained more ground in the last 18 months than in the preceding decade.

The gains are measurable and already showing up in production. A C compiler of 100,000 lines of code – fully developed, including detailed tests, in two weeks, autonomously (Anthropic, February 2026). Security and dependency updates auto-generated with an 86.5% acceptance rate (AIDev/GitHub, January 2026). Legacy systems that previously required years of analysis migrated in quarters. These aren’t benchmarks from a research lab. They are outcomes from real projects.

But here is what the productivity charts don’t show: the failure modes. Agentic AI operating without the right human judgment layer doesn’t just slow down – it confidently goes in the wrong direction at speed. In enterprise software, where business logic is decades old, regulatory constraints are non-negotiable, and a wrong architectural decision can cost millions to unwind, that risk is not theoretical.

This is the core insight behind itestra’s AI Software Factory: the productivity leap is real, and capturing it in enterprise contexts requires embedding senior engineering judgment at every critical decision point. The human-in-the-loop isn’t a safety net. It’s the product.

Six Decades of Productivity Gains – and One Inflection Point

To understand why 2026 is different, it helps to look at what came before. Each era of development tooling delivered meaningful gains, but the underlying model stayed constant: human developers wrote code, and tools helped them write it faster or with fewer errors.

The transitions from 3GL to 4GL to object-oriented programming each delivered roughly a 2–3x improvement in developer output for the right problem types. IDEs added debugging, refactoring, and autocomplete – measurable gains in individual productivity. Open source platforms and package ecosystems such as GitHub and npm meant that large classes of solved problems no longer needed to be re-solved. Cloud infrastructure and Infrastructure-as-Code abstracted away entire operational disciplines.

There was one conspicuous exception to the upward trend: distributed development. Offshoring and nearshoring introduced coordination overhead that, in many organizations, partially offset the cost savings. The lesson was that raw labor arbitrage without quality control and knowledge alignment degrades output. It’s a lesson that applies equally to AI agents.

Generative AI – coding assistants like GitHub Copilot and early Claude – started showing up in earnest in 2023. The gains were real but bounded: faster boilerplate, better search, useful suggestions. Developers remained firmly in control of every line that entered production.

Agentic AI, arriving in 2025–2026, is categorically different. An agent doesn’t suggest a function – it implements a feature, writes the tests, runs them, fixes the failures, and documents the result. The human role shifts from writing to steering. That is a fundamentally different relationship with the machine, and it demands a fundamentally different delivery model.

What “Autonomous” Actually Means in Enterprise Contexts

The word “autonomous” lands differently in a greenfield startup context than it does in an enterprise environment running 30-year-old business logic on a mainframe. Enterprise software has properties that make unconstrained AI autonomy genuinely risky.

Business logic is irreplaceable and often undocumented. Decades of regulatory changes, product iterations, and edge-case handling are embedded in code that no one fully understands anymore. An AI agent that misreads the intent of a critical calculation and propagates that misreading across a refactored codebase creates a problem that is vastly harder to diagnose than the original technical debt.

Architectural decisions have long half-lives. A wrong choice about service boundaries, data models, or integration patterns made today will constrain development for years. These decisions require understanding not just the codebase, but the business direction, the regulatory environment, and the operational constraints – none of which live in the repository.

Compliance is non-negotiable and jurisdiction-specific. Financial institutions, insurers, and healthcare organizations operate under regulatory frameworks – DORA, GDPR, GxP, Solvency II – where data handling, auditability, and process documentation are legal requirements, not engineering preferences. An AI agent trained on public internet data and running on infrastructure outside EU jurisdiction is not a compliant tool regardless of its output quality.

Security surface area grows with autonomy. An AI agent with broad codebase access and execution rights is a significant attack surface. Without proper sandboxing, access controls, and review gates, the efficiency gains can be offset by the introduction of vulnerabilities that bypass standard review processes.

None of these constraints mean that Agentic AI cannot be deployed in enterprise environments. They mean that deploying it responsibly requires a deliberate architecture – for how the AI operates, where human judgment intervenes, and how compliance is maintained throughout.

The itestra AI Software Factory

itestra’s AI Software Factory is the operational answer to the enterprise challenge. It is not a set of AI tools bolted onto an existing delivery process – it is a rethought delivery model in which human and AI roles are explicitly defined for each phase of the software lifecycle.

The workflow runs from high-level requirements through planning, execution, verification, and deployment. At each stage, the model distinguishes clearly between what AI agents do autonomously, what they do with human oversight, and what humans own outright.
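One way to make that three-way distinction explicit is to record an ownership level per phase and derive the sign-off points from it. The following is a minimal sketch, not itestra's actual implementation: the names `Ownership`, `Phase`, and `WORKFLOW` are hypothetical, and the ownership assigned to verification and deployment is an assumption made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Ownership(Enum):
    """The three role splits described in the text."""
    AI_AUTONOMOUS = "AI agents act autonomously"
    AI_WITH_OVERSIGHT = "AI agents act, humans review"
    HUMAN_OWNED = "humans decide, AI supports"


@dataclass(frozen=True)
class Phase:
    name: str
    ownership: Ownership


# Hypothetical assignment. Only "planning is human-led" and "execution is
# highly autonomous" come from the article; the rest is assumed.
WORKFLOW = (
    Phase("requirements", Ownership.HUMAN_OWNED),
    Phase("planning", Ownership.HUMAN_OWNED),
    Phase("execution", Ownership.AI_AUTONOMOUS),
    Phase("verification", Ownership.AI_WITH_OVERSIGHT),
    Phase("deployment", Ownership.AI_WITH_OVERSIGHT),
)


def requires_human_signoff(phase: Phase) -> bool:
    """A phase needs human sign-off unless agents own it outright."""
    return phase.ownership is not Ownership.AI_AUTONOMOUS


def phases_needing_signoff(workflow=WORKFLOW) -> list[str]:
    """Return the names of all phases that end in a human decision."""
    return [p.name for p in workflow if requires_human_signoff(p)]
```

Writing the split down as data rather than convention means a change in who owns a phase becomes a reviewable change in its own right.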

Planning and Architecture: Human Judgment, AI-Supported

Requirements analysis and architecture design remain human-led. Domain experts and senior engineers translate business intent into implementation plans, evaluate architectural variants, and make the decisions that will constrain everything downstream. AI agents support this phase – surfacing relevant patterns, analyzing existing system structures, generating documentation of current-state architecture – but they do not own the decisions.

This is deliberate. Architecture is where the cost of a wrong decision is highest and where domain knowledge – regulatory context, business direction, organizational constraints – is most critical. AI agents optimizing for code-level correctness without that context will produce technically coherent but strategically wrong outcomes.

Execution, Testing, Documentation: AI Agents in a Controlled Environment

Code generation, test writing, test execution, security reviews, dependency updates, and documentation generation are where AI agents operate with high autonomy. This is where the 3–10x efficiency gains materialize.

Agents run in sandboxed environments with defined access boundaries. They escalate proactively when they encounter ambiguity or decisions that exceed their defined scope. Senior engineers review outputs at structured checkpoints rather than line by line – a shift from manual execution to outcome verification.
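A defined access boundary with proactive escalation could look roughly like the sketch below. It assumes a purely path-based boundary and a hypothetical `AgentScope` class. A real sandbox would also restrict network access, credentials, and command execution, none of which is modeled here.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath


@dataclass
class AgentScope:
    """Hypothetical access boundary for a single agent task."""
    writable_roots: tuple[str, ...]
    escalations: list[str] = field(default_factory=list)

    def may_write(self, path: str) -> bool:
        target = PurePosixPath(path)
        # Reject any path containing ".." instead of trying to resolve it.
        if ".." in target.parts:
            return False
        # Component-wise check: "src/billing_old" is not under "src/billing".
        return any(target.is_relative_to(root) for root in self.writable_roots)

    def request_write(self, path: str, reason: str) -> str:
        """Allow in-scope writes; record everything else for human review."""
        if self.may_write(path):
            return "allowed"
        self.escalations.append(f"{path}: {reason}")
        return "escalated"


# Usage: the agent may touch the billing module and its tests, nothing else.
scope = AgentScope(writable_roots=("src/billing", "tests/billing"))
scope.request_write("src/billing/invoice.py", "implement new feature")
scope.request_write("src/core/ledger.py", "feature needs a shared change")
# scope.escalations now holds the out-of-scope request for a senior engineer.
```

The point of the design is that an out-of-scope request is neither silently dropped nor silently executed: it becomes an item at the next structured checkpoint.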

The evidence from itestra’s own projects is concrete. A new feature in a production ERP system exceeding one million lines of code was developed autonomously, including end-to-end tests, in under two hours. UI tests for a new application process were generated from legacy system screenshots in under two hours. Architecture documentation for a mainframe system of over one million lines was generated in one hour. These are not proof-of-concept results; they come from live client engagements as of February 2026.

Compliance and Sovereignty: Enterprise-Grade Infrastructure

All AI models in the Software Factory run on Amazon Bedrock with full EU data residency. No-training policies ensure that client code and data are never used to improve foundation models. Copyright protection for AI-generated output is contractually secured. These are not afterthoughts – they are design requirements that precede tool selection.

The senior engineer is a continuous presence throughout: not reviewing every line, but setting the guardrails, validating the outcomes, and making the calls that require business and regulatory judgment. That continuous human presence is what makes the autonomy of the AI agents safe to deploy in production enterprise contexts.

The Numbers From Production

The case for senior-guided Agentic AI doesn’t rest on projections. It rests on outcomes already observed in 2025–2026, both from itestra’s client work and from the broader industry:

- ERP system, >1M LoC: New feature developed autonomously including end-to-end tests in under 2 hours (itestra, February 2026)

- New application process: UI tests autonomously generated from legacy system screenshots in under 2 hours (itestra, February 2026)

- Mainframe system, >1M LoC: Complete architecture documentation generated in 1 hour (itestra, February 2026)

- C compiler, 100k LoC: Fully developed autonomously including detailed tests in 2 weeks (Anthropic, February 2026)

- Legacy system migration: Complex analysis and migration completed in quarters instead of years (Anthropic, February 2026)

- Security and dependency updates: Auto-generated with an 86.5% acceptance rate (AIDev/GitHub, January 2026)

The 3–10x efficiency figure is not a marketing claim. It reflects the range observed across different task types: analysis and documentation tasks tend toward the higher end of that range; complex feature development with significant architectural judgment required falls toward the lower end. The variability itself is informative – it points to exactly where human expertise matters most.

Where to Start

For enterprise organizations evaluating Agentic AI, the right question is not “which tools should we adopt?” It is “where in our delivery pipeline does human judgment matter most, and how do we build a model that preserves that judgment while capturing the efficiency gains?”

That requires an honest assessment of the current state: the architecture of existing systems, the documentation gaps, the regulatory constraints, and the skill profile of the engineering team. It also requires clarity about what “autonomous” means in your specific context – which decisions can be delegated to an agent and which cannot.

itestra’s entry point is a targeted AI Readiness Assessment – typically completed in one to two weeks – that maps the current software landscape, identifies where Agentic AI can deliver the highest immediate value, and defines the governance model required to deploy it responsibly. The output is a concrete roadmap, not a generic recommendation.

The productivity gains from Agentic AI are available to enterprises that approach them with the same rigor they apply to any other significant architectural decision. The tools are ready. The question is whether the judgment layer is in place to use them well.

